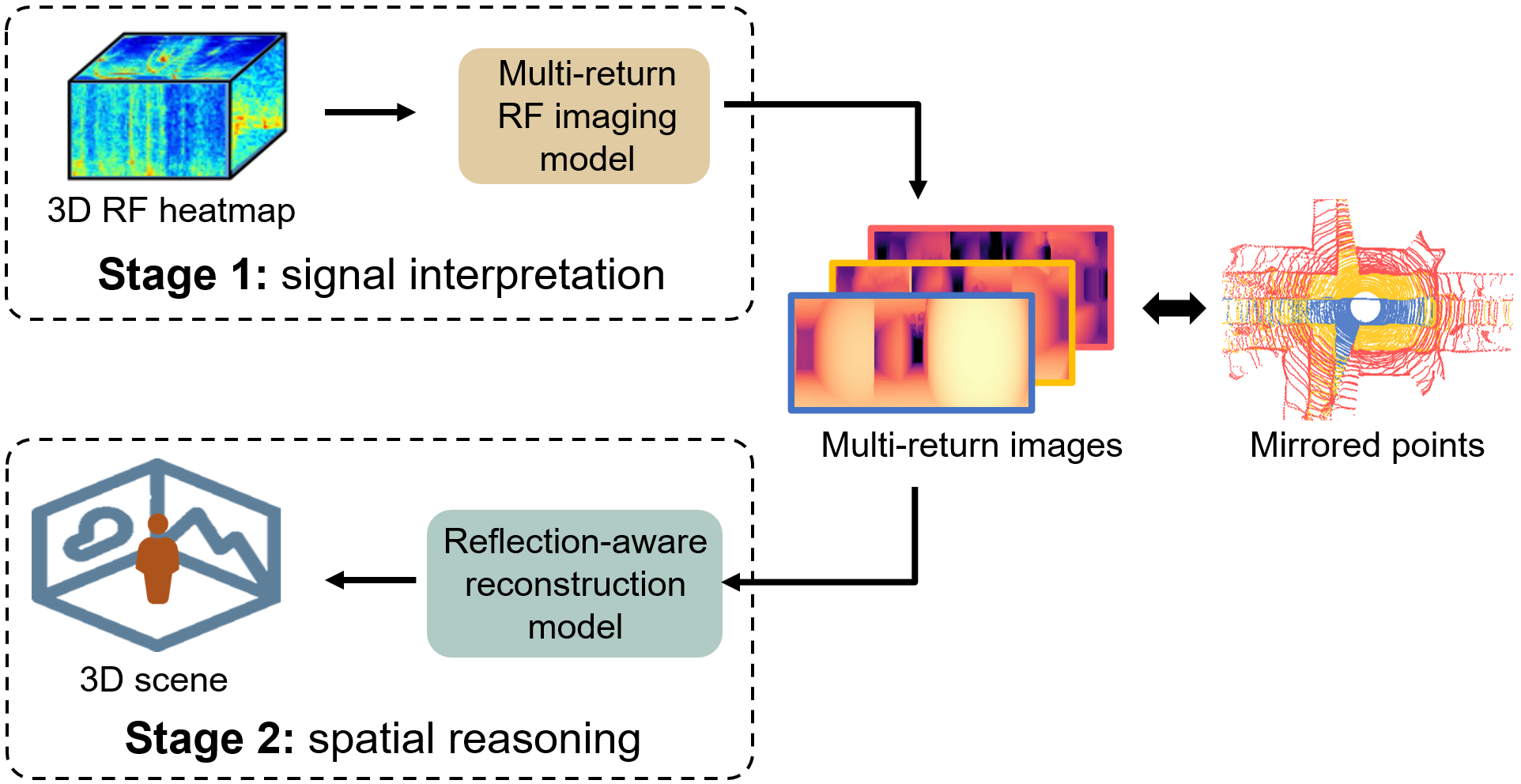

Seeing hidden structures and objects around corners is critical for robots operating in complex, cluttered environments. Existing methods, however, are limited to detecting and tracking hidden objects rather than reconstructing the full occluded scene. We present HoloRadar, a practical system that reconstructs both line-of-sight (LOS) and non-line-of-sight (NLOS) 3D scenes using a single mmWave radar. HoloRadar uses a two-stage pipeline: the first stage generates high-resolution multi-return range images that capture both LOS and NLOS reflections, and the second stage reconstructs the physical scene by mapping mirrored observations to their true locations using a physics-guided architecture that models ray interactions. We deploy HoloRadar on a mobile robot and evaluate it across diverse real-world environments. Our evaluation results demonstrate accurate and robust reconstruction in both LOS and NLOS regions.

HoloRadar uses a two-stage pipeline that separates imaging from mirroring correction.

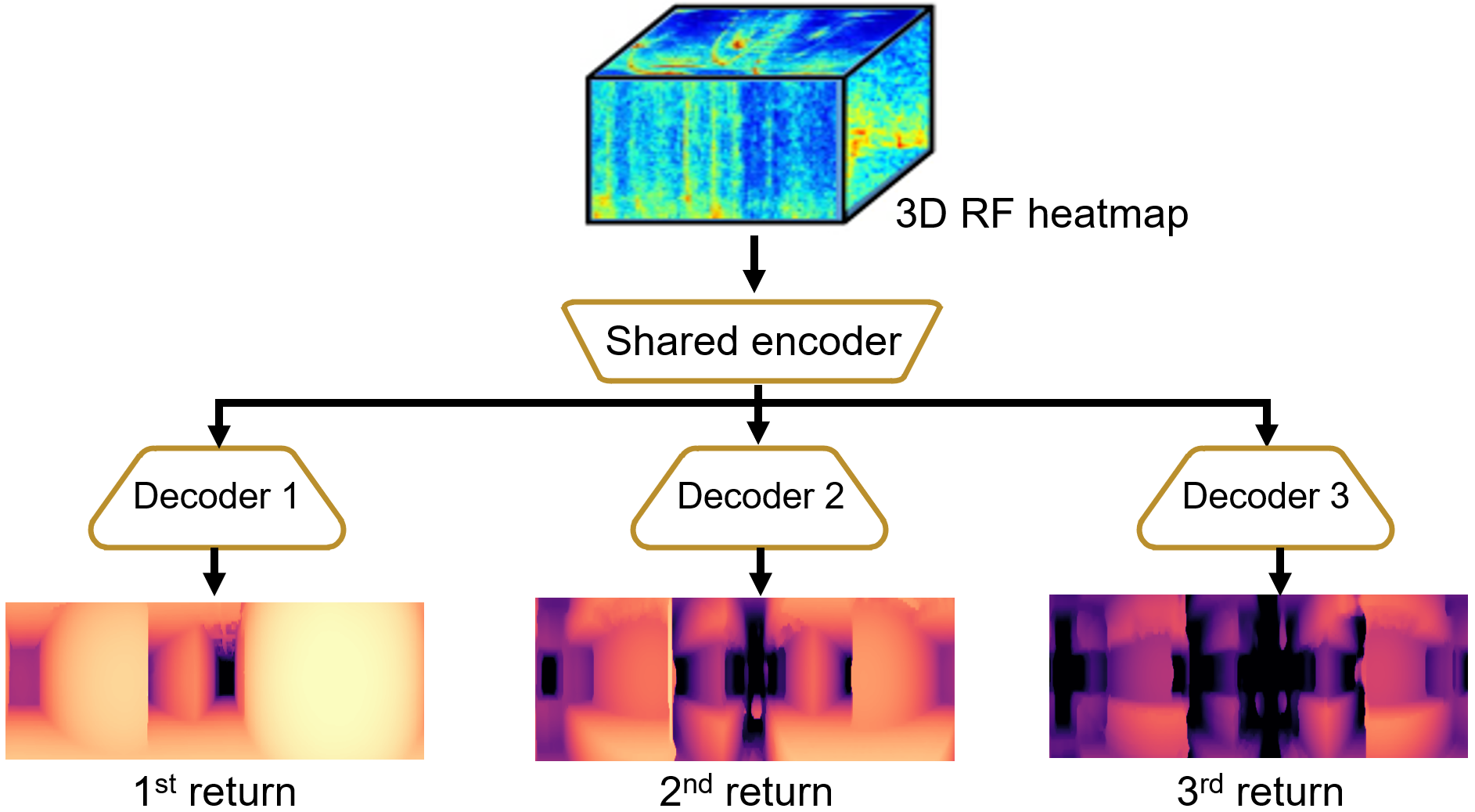

Specifically, our multi-return RF imaging model uses a shared encoder with separate decoders. The encoder processes the full heatmap and extracts a unified set of RF features. Each decoder then specializes in predicting the range image for its corresponding return.
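The shared-encoder, separate-decoder layout can be sketched with a toy model. This is only an illustration of the structure, not the HoloRadar network: the class names `Linear` and `MultiReturnImager`, the layer sizes, the choice of three returns, and the use of single dense layers on a flattened heatmap are all assumptions made for brevity. A real model would presumably be a much deeper network.

```python
import random


class Linear:
    """Tiny dense layer y = W x + b on plain Python lists (stand-in for a real layer)."""

    def __init__(self, n_in, n_out, rng):
        self.w = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
        self.b = [0.0] * n_out

    def __call__(self, x):
        return [sum(wi * xi for wi, xi in zip(row, x)) + b
                for row, b in zip(self.w, self.b)]


def relu(v):
    return [max(0.0, x) for x in v]


class MultiReturnImager:
    """Hypothetical toy model: one shared encoder, one decoder per return."""

    def __init__(self, heatmap_size, feat_size, image_size, num_returns=3, seed=0):
        rng = random.Random(seed)
        self.encoder = Linear(heatmap_size, feat_size, rng)
        # Each decoder has its own weights, so it can specialize in one return.
        self.decoders = [Linear(feat_size, image_size, rng) for _ in range(num_returns)]
        self.encoder_calls = 0

    def __call__(self, heatmap):
        self.encoder_calls += 1
        features = relu(self.encoder(heatmap))  # computed once, shared by all decoders
        # ReLU keeps the predicted ranges non-negative.
        return [relu(decoder(features)) for decoder in self.decoders]


# Usage: a flattened 16-value "heatmap" yields three 4-pixel range images.
model = MultiReturnImager(heatmap_size=16, feat_size=8, image_size=4)
range_images = model([0.5] * 16)
```

The point of the layout is that the expensive feature extraction runs once per heatmap, while each decoder head is free to learn what distinguishes its own return from the others.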

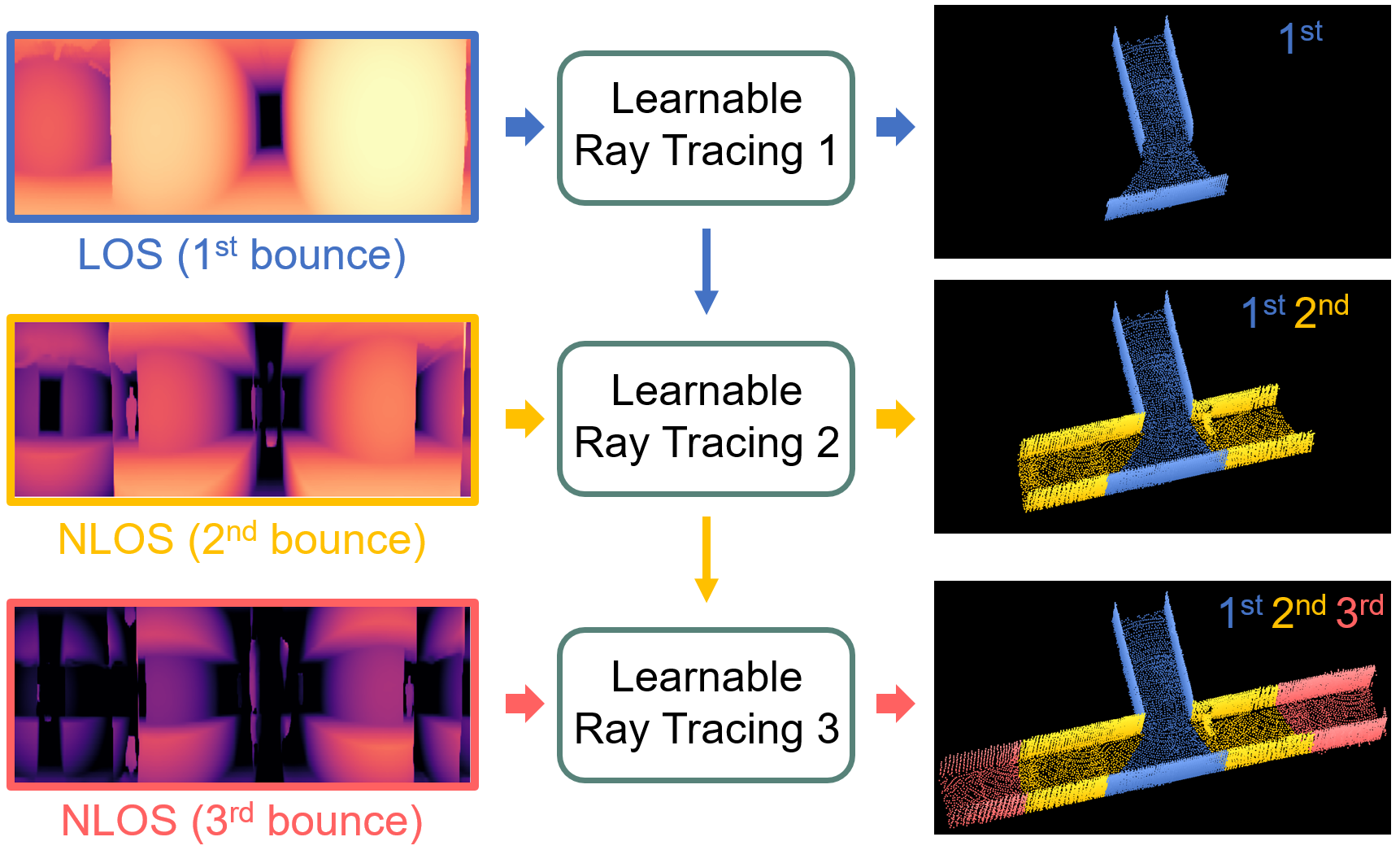

To reconstruct the true 3D scene, we use a ray tracing module that tracks how the signal propagates and updates the location of each hit, which lets us recover the true geometry. The tracked locations are then used to determine the reflection path of the next return. By cascading these ray tracing modules, the reconstruction gradually reveals the hidden NLOS regions. Note that our ray tracing module does not rely purely on physical modeling; it combines signal processing with learnable layers to mitigate error accumulation during ray tracing.
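The geometric core of this step, mapping a mirrored observation back to its true location, can be sketched with plain vector math. The sketch below assumes ideal specular reflection off flat surfaces whose unit normals are already known, and the function names `reflect_direction`, `trace_returns`, and `mirror_across_plane` are hypothetical. It covers only the physical-modeling part; the learnable layers and signal processing that the actual module uses to limit error accumulation are not represented.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def reflect_direction(d, n):
    """Specular reflection of unit direction d about unit surface normal n."""
    k = 2.0 * dot(d, n)
    return tuple(di - k * ni for di, ni in zip(d, n))


def trace_returns(origin, direction, ranges, normals):
    """Unfold the returns seen along one radar ray into true hit points.

    ranges[i]  : cumulative path length to the i-th return
    normals[i] : unit normal of the surface hit by the i-th return
                 (assumed known here; it bends the ray for the next return)
    """
    points = []
    pos, d, travelled = origin, direction, 0.0
    for r, n in zip(ranges, normals):
        step = r - travelled
        pos = tuple(p + step * di for p, di in zip(pos, d))
        points.append(pos)
        d = reflect_direction(d, n)  # cascade: next return follows the bent ray
        travelled = r
    return points


def mirror_across_plane(point, plane_point, n):
    """Reflect a point across the plane through plane_point with unit normal n."""
    dist = dot(tuple(p - q for p, q in zip(point, plane_point)), n)
    return tuple(p - 2.0 * dist * ni for p, ni in zip(point, n))


# Radar at the origin looking 45 degrees toward a wall at x = 2.
s = math.sqrt(0.5)
direction = (s, s, 0.0)
r1, r2 = 2.0 * math.sqrt(2.0), 4.0 * math.sqrt(2.0)
hits = trace_returns((0.0, 0.0, 0.0), direction,
                     ranges=[r1, r2],
                     normals=[(-1.0, 0.0, 0.0), (0.0, -1.0, 0.0)])

# The second return appears as a "ghost" behind the wall at range r2.
ghost = tuple(r2 * di for di in direction)
unfolded = mirror_across_plane(ghost, hits[0], (-1.0, 0.0, 0.0))
```

In this example the first return lands on the wall near (2, 2, 0). The second return appears to the radar as a ghost near (4, 4, 0), and both the step-by-step trace and the mirror across the wall plane place it near (0, 4, 0), around the corner. Because each hit point seeds the next reflection, an error in an early normal or range propagates to every later return, which is the accumulation problem the paragraph above mentions.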

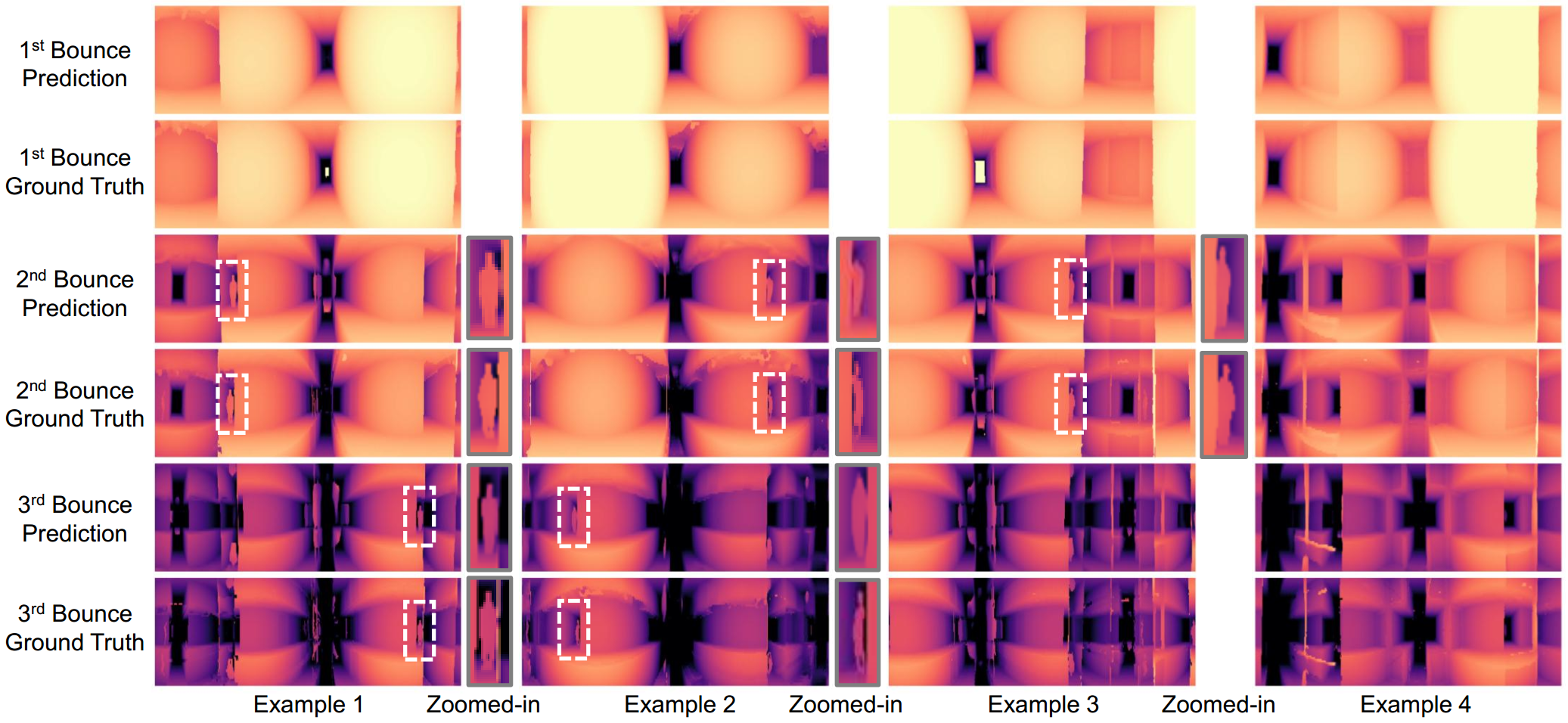

We present the multi-return RF imaging results. The predicted range images align closely with the ground truth. Our model successfully reveals NLOS structures and the hidden human (highlighted and zoomed in with white boxes) in both the 2nd- and 3rd-bounce images.

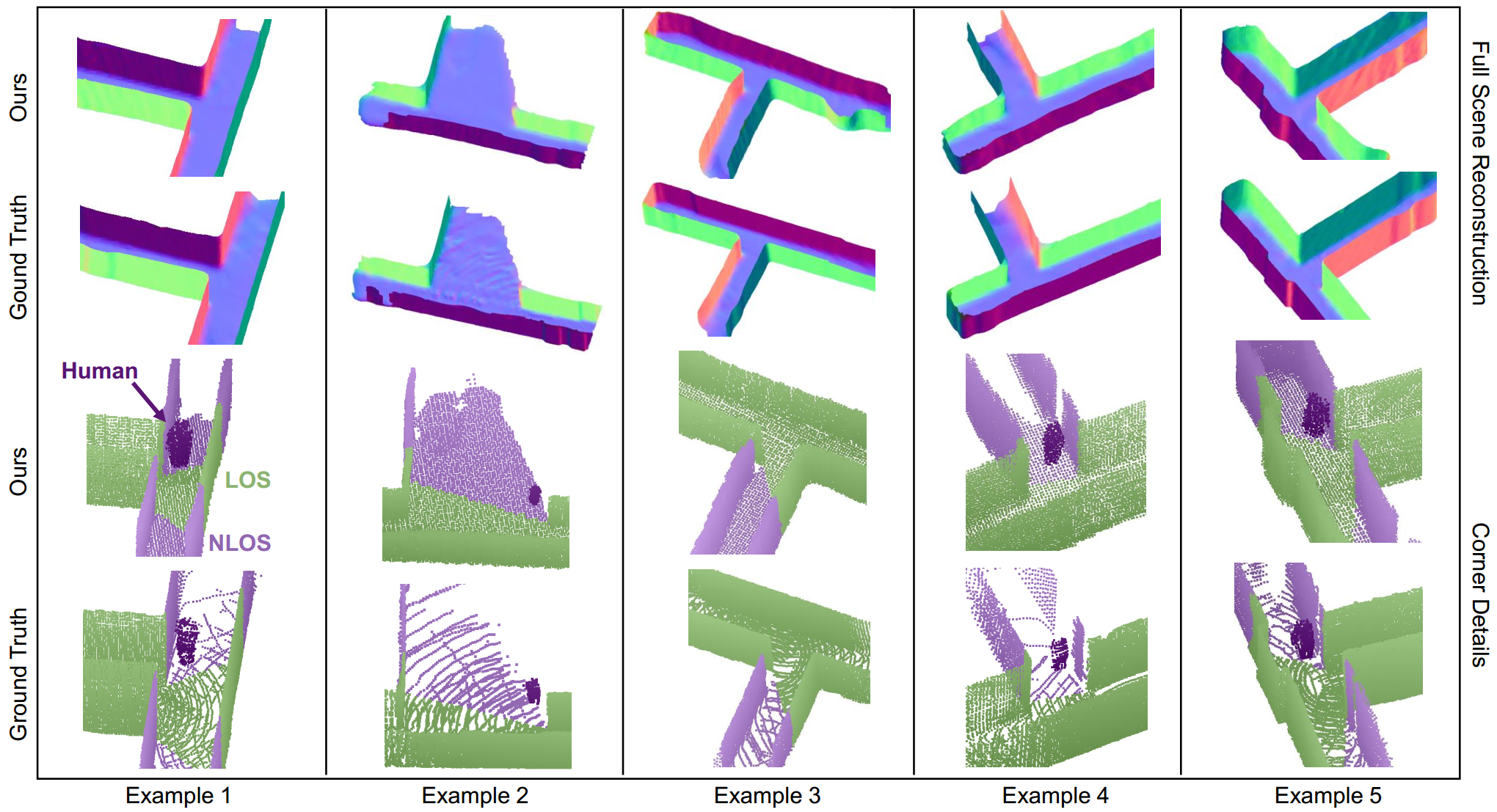

We show the scene reconstruction results. The top two rows show the full scene reconstruction, and the bottom two rows show detailed views of each corner. Green and purple points represent LOS and NLOS geometries, respectively, while dark-purple points indicate the hidden person. The predicted reconstructions align closely with the ground truth, accurately capturing both hidden humans and surrounding NLOS structures.

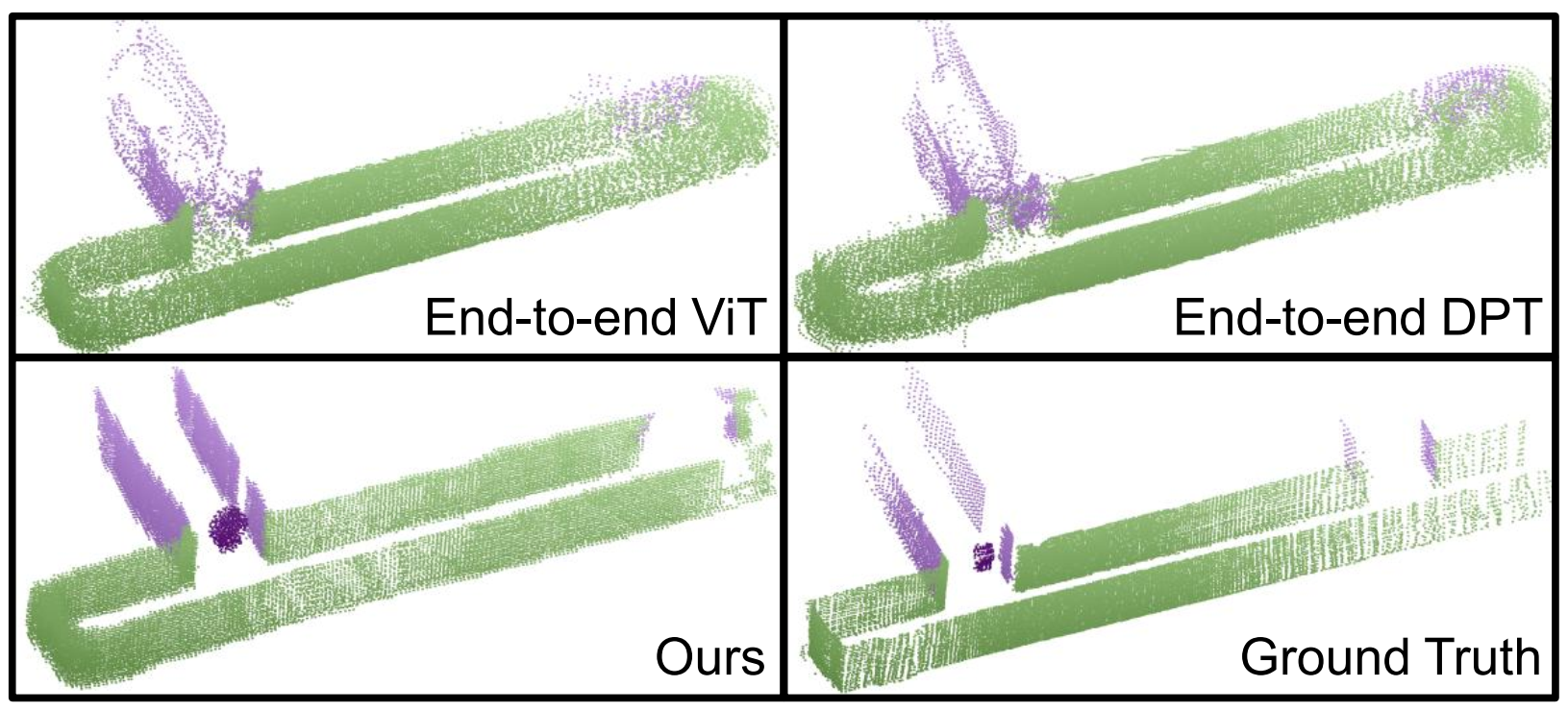

HoloRadar is compared with two end-to-end transformer-based methods. Our approach produces clearer and more complete reconstructions in both LOS and NLOS areas, while the baselines exhibit noisy or incorrect structures (e.g., missing humans or closed corridors).

Example 1: Ours vs. Ground Truth

Example 2: Ours vs. Ground Truth

We collect a dataset from 32 distinct corners across 5 buildings, which were constructed between 1906 and 1996 and renovated between 1973 and 2017. The dataset covers diverse corner layouts: 21 T-shaped, 5 L-shaped, 5 cross-shaped, and 1 oblique corner at 45°. Corner width ranges from 1.33 m to 4.63 m, with a mean of 2.16 m and a standard deviation of 0.89 m. At each corner, we positioned a human subject behind the corner, out of the direct LOS, to simulate realistic NLOS imaging conditions. Both the human and the robot are free to move, yielding a total of 28k distinct RF heatmap scans.

We will release code and dataset to facilitate future research in this direction.

If you find the HoloRadar method or dataset useful for your work, please consider citing:

@inproceedings{holoradar,
  title={Non-Line-of-Sight 3D Reconstruction with Radar},
  author={Lai, Haowen and Lan, Zitong and Zhao, Mingmin},
  booktitle={Annual Conference on Neural Information Processing Systems (NeurIPS)},
  year={2025}
}

If our rotating radar design inspires your work, please consider citing PanoRadar:

@inproceedings{panoradar,
  title={Enabling Visual Recognition at Radio Frequency},
  author={Lai, Haowen and Luo, Gaoxiang and Liu, Yifei and Zhao, Mingmin},
  booktitle={Proceedings of the 30th Annual International Conference on Mobile Computing and Networking (MobiCom)},
  pages={388--403},
  year={2024}
}

This work was carried out in the WAVES Lab, University of Pennsylvania. We sincerely thank the reviewers and AC for their insightful comments and suggestions. We are grateful to Zhiwei Zheng for the discussion, and to Running Zhao and Xin Yang for their help in data collection.
